APAN (Asia Pacific Advanced Network) brings together national research and education networks in the Asia Pacific region. APAN holds meetings twice a year to talk about current activities in the regional NREN sector. I was invited to be on a panel at APAN 50 on the subject of Cyber Governance, and I’d like to share my perspective on this topic here.

I’m not sure what “Cyber Governance” actually means!

We’ve conventionally used the term governance to describe the relationship between citizens and the state, or more generally between a social group and its leaders. It’s intended to relate to the processes of decision making that reinforce societal norms and nurture a society’s institutions. Much has been said about the processes of governance, its accountability, its effectiveness and the ways in which it can degenerate and be abused. But I’m still somewhat challenged when I try to apply this governance concept to the vague and insubstantial digital environment.

Maybe we could take a more mechanistic view of governance by looking at its intended outcomes. Thus, we could say that the intended outcome of a governance system is the imposition of a collection of constraints on the various actors. In this sense it is similar to a governor on a motor, for example.

In the context of public telecommunication services, or the cyber world, we could see the outcomes of a governance framework as a set of nationally legislated or regulated constraints that are applied to service operators. But even this definition is somewhat unsatisfactory. While many national regimes would like to think otherwise, there is still a major set of activities that do not clearly sit within national frameworks. Questions relating to the management of the Internet’s naming and addressing infrastructure intersect with national governance mechanisms, but the Internet-wide perspective is larger than the sum of each national perspective. When we leave the realm of nation states it becomes relevant to ask these governance questions. Who’s in charge? Who appointed these governing bodies? How are decisions made? Where is accountability in this framework?

Those are tough questions, and finding usable answers is equally challenging. Perhaps it might be useful to first understand how we arrived at this point.

How did we get here?

The Internet’s origins in terms of its public utility role lie within the structure of the public telephone system and its evolution. Following the World Exposition of 1876, the telephone was enthusiastically adopted, first in the United States and soon after across many parts of the world. The immediacy of direct real time communication was both exciting and empowering, and the technology spread rapidly.

After a couple of decades of furious piecemeal expansion, the proliferation of small commercial telephone companies was a clear impediment to a broader vision of the telephone as an integral part of societal infrastructure, on a par with national scale networks of railways, roads and mail delivery. Universal service was seen to be an essential component of national infrastructure, and to get there all these diverse competing telephone companies needed to be corralled together to create a seamless national service. It was unclear how this could be achieved across this essentially unregulated space, and the catch-cry that emerged (perhaps as a statement of self-interest in the case of Theodore Vail and AT&T at the time) was “One Service, One Operator”. In the United States, the “Kingsbury Commitment” consent decree of 1913 allowed AT&T to divest itself of the Western Union telegraph company, and in return receive congressional blessing to be a monopoly common carrier for a single telephone service for the entire country. Some countries followed this model of a regulated national monopoly, while others subsumed the telephone function into a state-owned and operated enterprise, often allied with the postal service. The result was relatively uniform for much of the twentieth century, where the telephone service was operated as a national monopoly. International telephone networks, as they were deployed, had no independent existence. The international system was constructed as a set of bilateral arrangements between national telephone operators.

Throughout the twentieth century progressive technology innovations increased the capacity of these telephone networks and reduced the unit cost of carrying calls. However, this did not necessarily imply a comparable reduction in the cost of the service to consumers. There was an increasing disparity between service costs and service revenues, and due to the monopoly nature of the service there was little in the way of natural incentives to pass these technology dividends back to consumers in the form of lower prices. The commitment to these 1913 arrangements had well and truly waned by the 1970s, and in 1984 the Bell System was broken up. Long distance telephone services were opened up to competition first, followed by a more comprehensive deregulation of the entire telephone service. Similar moves were underway in many other countries. The previous monopoly was opened up to competitive service providers and in many cases the public enterprises were privatised.

The rationale for this deregulation could be expressed as a desire to shift the investment burden for national telco infrastructure from the public sector to the private sector, and at the same time introduce competitive pressures to eliminate the element of monopoly rentals in the price of the service. The expectations of deregulation were expressed both in terms of cheaper prices to consumers and increased incentives for the private sector to invest in infrastructure renewal. The intent was all about competition in telephony at a national level. The governance structure of this activity still remained one based around the national legislature in each regime.

But the telecommunications sector didn’t follow this plan. Deregulation of the telecommunications industry opened up the sector to competition in technology rather than just limiting itself to competition between service providers all sitting on a common technology platform. The increasing use of computer systems in the private and public sectors meant increased demands for data services from telecommunications services. These demands for data had been met by taking some of the capacity that was in the synchronous circuit-switched network and using it to construct end-to-end data circuits. But switching time is expensive, and computers have no inherent requirement for synchronicity between sender and receiver. Competition opened up new niche markets and one of these was the market for data communications.

From Circuits to Packets

Packet switching networks emerged for data communications. Packet switching is invariably a far more efficient way to share a common communications system. Rather than the network attempting to arbitrate across a set of resource demands, the machines that are sending data use feedback control to moderate individual demands and sustain a dynamic equilibrium across all such sources. The result is a vastly improved efficiency in the use of the common communications system. Packets do not need synchronicity, and while voice-based networks were constructed using time division switches, packet networks could dispense with the common timing signal altogether. Rather than having to set up state within the network to pass a unit of data to its intended destination, each packet could describe its intended destination to each network switching element. Such packet switching networks could avoid everything that was expensive to operate in synchronous time-switched networks. Simply put, dedicated packet switching could be multiple orders of magnitude cheaper to build and operate than synchronous circuit switched networks.
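The feedback control described above can be illustrated with a toy model. The text does not name a particular scheme, so this sketch assumes additive-increase, multiplicative-decrease (AIMD), the well-known approach used in TCP congestion control. The function name `simulate_aimd`, its parameters and the starting rates are all hypothetical, chosen only for illustration.

```python
def simulate_aimd(rates, capacity, rounds, increase=1.0, decrease=0.5):
    """Toy model of senders sharing one link under AIMD feedback.

    Assumption: all senders see the same congestion signal in each round,
    which is a simplification of how real networks behave.
    """
    rates = list(rates)
    for _ in range(rounds):
        if sum(rates) > capacity:
            # Congestion signal: every sender backs off multiplicatively.
            rates = [r * decrease for r in rates]
        else:
            # No congestion: every sender probes for a little more capacity.
            rates = [r + increase for r in rates]
    return rates


# Two senders start with very unequal demands on a link of capacity 100.
final = simulate_aimd([1.0, 80.0], capacity=100.0, rounds=500)
print(final)
```

In this model each additive step leaves the gap between the two senders unchanged, while each multiplicative back-off halves it. The senders therefore drift toward equal shares without any central arbiter, which is the kind of dynamic equilibrium the paragraph above describes.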

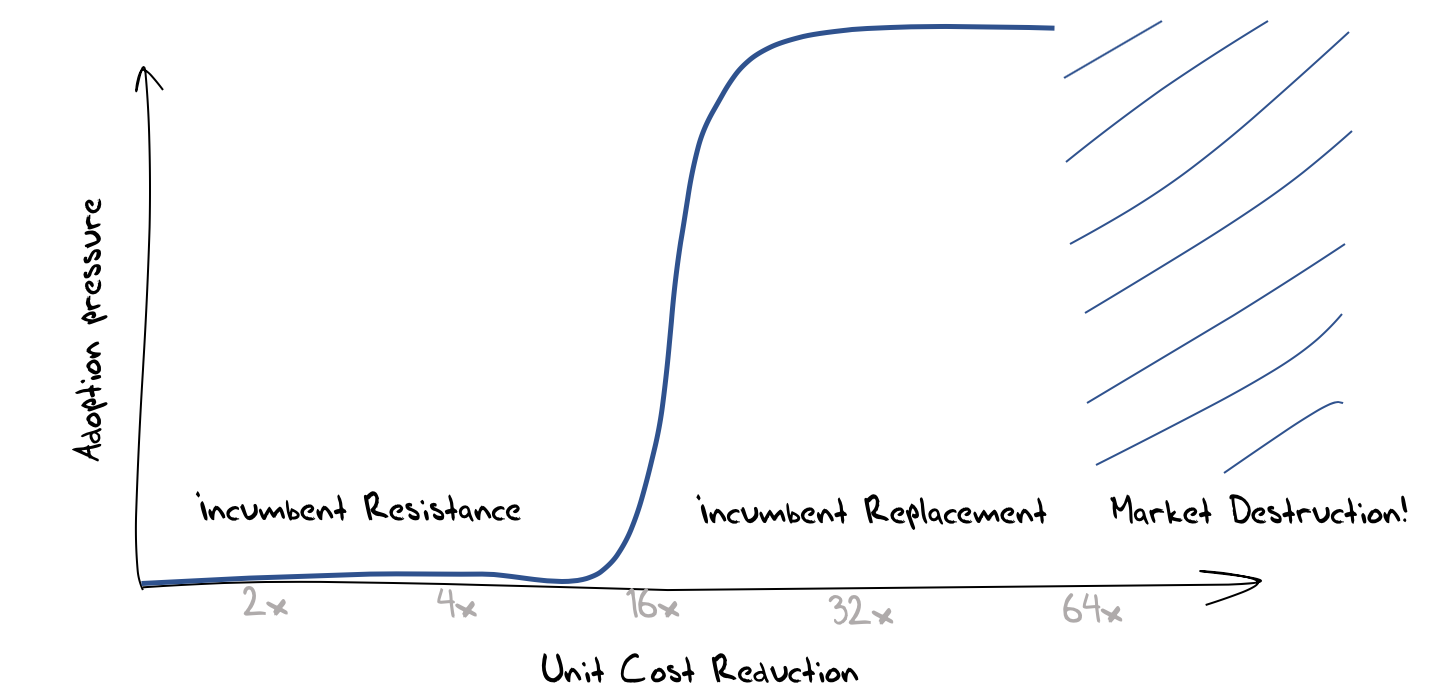

Competitive pressures can produce vastly different outcomes depending on the cost efficiency of the incumbent technology as compared to that of the competitive entrant. Where the cost differential is marginal, the incumbent can react and make marginal improvements in its own infrastructure, and the environment tends to favor incumbents. Where the cost differential is larger, the competitive pressure becomes disruptive and the incumbent is forced to shift its technology base to achieve a similar cost base. At this point the advantage of incumbency has been largely destroyed and the result often involves the installation of a new generation of incumbent operators. Even greater levels of cost reduction can entirely destroy the market (Figure 1).

Figure 1 – An Economic Model of Competition and Innovation

This is what we saw in the 1990s in the telecommunications sector when packet-switched networks were placed into direct competition with circuit-switched networks. It was clear that the Internet created a new cost base for communications infrastructure that formed an existential challenge for the incumbent telephone service operators (telcos). This placed the telco sector, and its considerable revenue base, up for grabs. Not surprisingly, given the size of the potential rewards, the appetite for risk on the part of the challengers increased and venture capital funds entered into the market to accelerate the disruptive competitive process. The pressures placed on the incumbent telcos increased, and they were being forced to undertake fundamental transformation at a scale and speed that was beyond the capability of many. These obvious signs of weakness encouraged further disruptive pressures to be brought to bear, and the resultant communications marketplace was shaped by a continuous stream of disruptive innovative pressures. The market shifted from voice over copper wires to data services, then to mobile services, then to the so-called “smart” phones, and then to rich content models with associated demands on content distribution.

Constant innovation in the technology base of any service is very challenging. Each generation of technology has a limited lifetime before it is swept aside by the next generation. Investment risk is increased, and the cost of capital rises to reflect this increased risk. As a challenger, each actor strives to maximise the pressure of change in order to install itself as the new incumbent. Once installed, each incumbent actor seeks a stable environment that can secure its own incumbency and resist further challenge.

Market-based Governance

The previous section looked at the rise of the Internet through the lens of the economics of innovation, and that perspective leads me to a view that the governance mechanisms of today’s environment are similar in nature to the control mechanisms in the Internet protocols themselves. In the same way that these packet networks self-regulate their use of the common resource to achieve both high efficiency and fairness, these competitive market disciplines provide similar mechanisms of constraint on service providers in this sector. As long as there is vibrant competition between providers, and as long as consumers are not locked into the services of any particular provider, then providers have an incentive to offer a service that reflects an efficient production outcome.

Obviously, this is not a novel view of the role of markets, and much of Adam Smith’s invisible hand of market pressures in his 1776 treatise on the Wealth of Nations can be seen in this perspective. In this model markets essentially self-regulate. Inefficient producers cannot compete on price with more efficient producers and the market price of goods is only sustainable if it reflects the efficient cost of production.

Much of our industrial and post-industrial societies have been constructed upon these market-based principles where competition provides the set of constraints that are imposed on service providers. This is the general governance framework used in many realms of activity. Providers compete with each other in the supply of goods and services and consumers can influence the market through the choices they make when purchasing goods and services. In the case of the producer and the consumer in such markets self-interest is meant to align with common interest, and external intervention should be unnecessary in such circumstances.

But this is often not enough. Markets can fail in many ways. Monopolies and cartels can form, where the incumbents have sufficient market power that they can define the terms of competition. Self-interest naturally comes into play and the terms of competition typically increase barriers to entry for potential competitors, allowing the incumbents to charge consumers a monopoly rental within the price of their services. There are other forms of distortion, including supply-side constraints, selective dumping, and corruption. Indeed, the ways in which markets can be distorted are limited only by human creativity!

However, the results of these various forms of market distortion are all similar and are collectively termed “market failure”. They result in inefficiencies in the supply of goods and services, and this inefficiency becomes a premium placed on the goods and services in this market.

Restoring efficiency to a failed market generally becomes a role for the public sector. Frameworks that oversee markets typically include the power to impose remedies, including fines and sanctions, or the subsidisation of competitive entrants. In some cases, this may include the forced breakup of a provider to reduce the level of influence of any single entity on the market.

Today’s Internet

The opening up of the telco sector to competition was meant to replace a public sector utility function with a private sector competitive market, allowing the national economy to benefit from an efficient communications infrastructure that was sustained through continued private sector investment.

However, here’s where theory and practice have diverged.

The Internet was never aligned to national realms. There was no addressing plan that was similar to the national number plans used in telephony. Equally there was no transactional tariff that exposed marginal costs to consumers when packets transited across national boundaries. Indeed, it’s extremely unclear where consumers and service providers reside. The intent of the network was to allow a service provider to be accessed by any and all consumers in an identical fashion.

This had some interesting repercussions. It allowed service providers to be exposed to a global market of potential customers with no additional imposed costs or other barriers. At the same time, it allowed agile service providers to become extremely big extremely quickly. When an enterprise is not constrained by the physical delivery of goods and materials, and a digital presence can be accessed by all, then there are few natural limiting constraints on growth. The growth can quickly surpass national domains, and also span other social domains, including language and culture.

And that’s what’s happened. The seven largest enterprises in today’s world, using the metric of market capitalisation, are all digital giants (Figure 2).

Figure 2 – Top 10 Public corporations by market capitalisation in 2020 (Wikipedia)

These days the largest three each have a market capitalisation of around 1.5 trillion dollars, significantly larger than the GDP of most nations.

So, we have got to the position where a small clique of enterprises totally dominates the enterprise world in terms of size, and each of these enterprises completely dominates its chosen field of activity.

But is this in and of itself a cyber governance problem that is crying out for a solution? Do these enterprises exploit their labor force? It seems unlikely, and in some respects these enterprises are model employers. Are they extracting monopoly rentals from their customers? Again, that does not seem to be the case. Are they ignoring consumer preferences and desires?

That last question perhaps gets to the heart of the issue. The answer is most definitely “no”. Far from ignoring consumer preferences these enterprises are highly efficient operators in the new economy of surveillance capitalism. They have finely honed their ability to customise a solution for each unitary market of a single consumer, generating a profile of each user and then selling this profile to advertisers, who are willing to bid a premium price to have their ad presented to the user. They have also been careful to heed the preferences of each user and attempt to maximise the relevance and utility of the advertisement to match the user’s individual preferences and needs. This is a previously unparalleled level of attention to the desires and needs of individual users, and the services have been popular with users because of this careful attention to understanding what users prefer and attempting to match these preferences with goods and services. As consumers we want these services because they are tailored for us.

Of course, this is not the only industry that attempts to cater to users’ desires and preferences, and the same questions we ask of the fast food industry, or the soft drink industry, can be asked of this model as well. We may well express a preference here, but is catering to such preferences in our best interests?

Protecting the User

This concept of protecting the interests of the individual in an environment where surveillance appears to have run rampant is the thrust of much of the current regulatory interest. The European General Data Protection Regulation (GDPR) is a good example of this focus, as it calls for enterprises to have a far greater level of respect for personal data and personal privacy, and passes some level of control back to the individual as to how their personal data is collected, stored and used. It has attempted to cut through opaque and exploitative end user agreements and foster a culture of responsible disclosure as to how personal data is gathered and used.

The shift in emphasis in this form of governance is worthy of highlighting. It does not attempt to manage or curtail any particular market behaviour. Instead it focuses on the individual and attempts, in some small way, to alter the incredibly asymmetric relationship between the entities who are assembling these personal profiles and the individual subjects of this surveillance.

The measures could go further in the coming years, and it’s likely that they will. Who owns data that describes me? Where is it stored? What regulatory regimes protect this data? Can I see it? Can I withdraw my permission to hold it? Should I be informed when my profile is sold? What is my profile worth? Who is at fault if my profile is leaked and how can I seek redress? An effective regime to protect me should be able to clearly answer such questions.

Protecting our Society

But maybe this is still not what we really need from effective governance of this space. Like the industrial revolution of the nineteenth century, the societal changes we are part of today are deeply impactful upon the very fabric of our society. We are now communicating with a computer-mediated environment, rather than communicating with each other. The network itself is largely incidental to this story, and it’s not about the Internet anymore. The combination of abundant computing capability, abundant storage and abundant communications has created a transformative environment that has its own momentum.

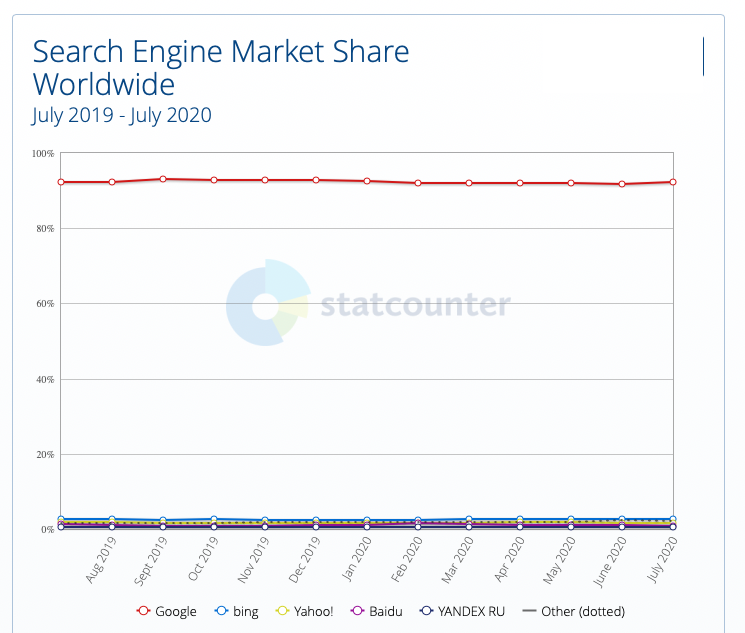

In a world of abundant content what do we choose to view? And to what extent are such choices truly our own? What do we choose to believe? What information can we use to ground our decisions and choices? These days search has become far more than a tool and is now the arbiter of content. We see what search delivers to us. We believe what search tells us to believe. Our navigation through this world is now determined by search.

So how do we feel about search being dominated by a single commercial entity?

Figure 3 – Search Engine Market Share (gs.statcounter.com)

From the time of the Great Library of Alexandria more than two millennia ago the library, as the repository of the sum of all our knowledge, is most effective for the society it serves when it is operated as a public institution. These public libraries were the gateway to knowledge and culture, created opportunities for learning and education, and helped us in our efforts to shape new ideas and perspectives. They were the heart of our institutions of higher learning and research, and formed the reference backbone of all of our human knowledge.

And now this role has been superseded by private enterprise in the form of search. And most critically, it has been superseded by one single private enterprise. If our discourse within our society is now arbitrated by search to the extent that if search cannot find it then it no longer exists, then we’ve managed to admit a single private enterprise into perhaps the most privileged role in our society.

It’s truly amazing that the sum of human knowledge is at my fingertips, instantly accessible from anywhere at any time. That’s incredibly empowering.

It’s truly frightening that all this information is only accessible through a single entity, which funds this service through an insidious economy based on surveillance capitalism. It’s incredibly scary that this enterprise appears to have no accountability in its self-assumed role of global information arbiter.

And I suspect that in these two observations there is the true substance of the issue of cyber governance. The digitisation of every aspect of our society, and every aspect of our lives, has resulted in a fatal erosion of the role of our public institutions and replaced them with private services that are founded on extractive frameworks that capitalise each and every one of us. The benefits of the digital world are truly massive, but the framework we’ve created to provide these benefits has been built at personal and societal costs that are commensurate in every way with the benefits.

For our society this market-driven transformation is both incredibly empowering and incredibly threatening at the same time. The essential question I’d like to see addressed in an effective Cyber Governance framework is: Can we devise governance structures that can protect our societies and allow them to thrive with open and informed discourse and at the same time avoid burning down this truly awesome digital library?